Confirmation Bias

The silent tendency to seek, remember, and believe information that agrees with you — and to dismiss, forget, or argue with everything else. The most pervasive reasoning error in the human mind.

In 1960, a young British psychologist named Peter Wason gave his students a deceptively simple puzzle. He showed them three numbers — 2, 4, 6 — and told them these numbers followed a rule he had in mind. Their job was to figure out the rule by proposing their own triples. He would tell them "yes, that fits my rule" or "no, it doesn't." When they were confident, they could announce the rule.

Almost every student went about it the same way. They'd propose 8, 10, 12. "Yes." They'd try 20, 22, 24. "Yes." They'd try 100, 102, 104. "Yes." Confident, they'd announce: the rule is "three consecutive even numbers ascending by two." Wrong. The rule, as it turned out, was simply "any three numbers in ascending order." 8, 10, 12 fits. So does 1, 2, 3. So does 5, 847, 1000.

The students didn't find the rule because they only ever tested triples they expected to fit. They never tried 1, 2, 3 — which would have immediately shown their hypothesis was too narrow. They never tried 5, 4, 3 — which would have told them direction mattered. They were hunting for confirmation, not testing for disconfirmation, and so they confidently announced wrong answers. Wason called this pattern confirmation bias, and over the next sixty years it became arguably the single most replicated finding in cognitive psychology.
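The trap can be made concrete with a small sketch. The two functions below encode Wason's rule and the typical student guess as described above; the 1-to-12 range and all the names are assumptions chosen for illustration, not part of Wason's procedure. The point it illustrates is that every triple the students expected to fit also fits the real rule, so their tests could never return a surprise.

```python
from itertools import product

def true_rule(triple):
    """Wason's actual rule: any three numbers in ascending order."""
    a, b, c = triple
    return a < b < c

def student_hypothesis(triple):
    """The typical guess: even numbers ascending by two."""
    a, b, c = triple
    return a % 2 == 0 and b == a + 2 and c == b + 2

# Every triple of whole numbers from 1 to 12 (range chosen arbitrarily).
all_triples = list(product(range(1, 13), repeat=3))

# Positive tests: triples the student expects to get a "yes".
positive_tests = [t for t in all_triples if student_hypothesis(t)]
# Negative tests: triples the student expects to get a "no".
negative_tests = [t for t in all_triples if not student_hypothesis(t)]

# A test teaches something only when the answer contradicts the expectation.
surprising_positive = [t for t in positive_tests if not true_rule(t)]
surprising_negative = [t for t in negative_tests if true_rule(t)]

print(len(positive_tests), len(surprising_positive))  # 4 0
print(len(negative_tests), len(surprising_negative))  # 1724 216
print((1, 2, 3) in surprising_negative)               # True
```

In this toy range, none of the positive tests can ever produce a "no," while 216 of the triples the students would have expected to fail actually pass. Only by proposing something they expected to be rejected could they have learned their hypothesis was too narrow.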

It is also the reason two smart people can read the same news, watch the same debate, sit through the same meeting, and walk away more convinced of opposite positions than when they started. This essay is about how that happens, why the mind is built this way, and what you can actually do about it.

What confirmation bias actually is

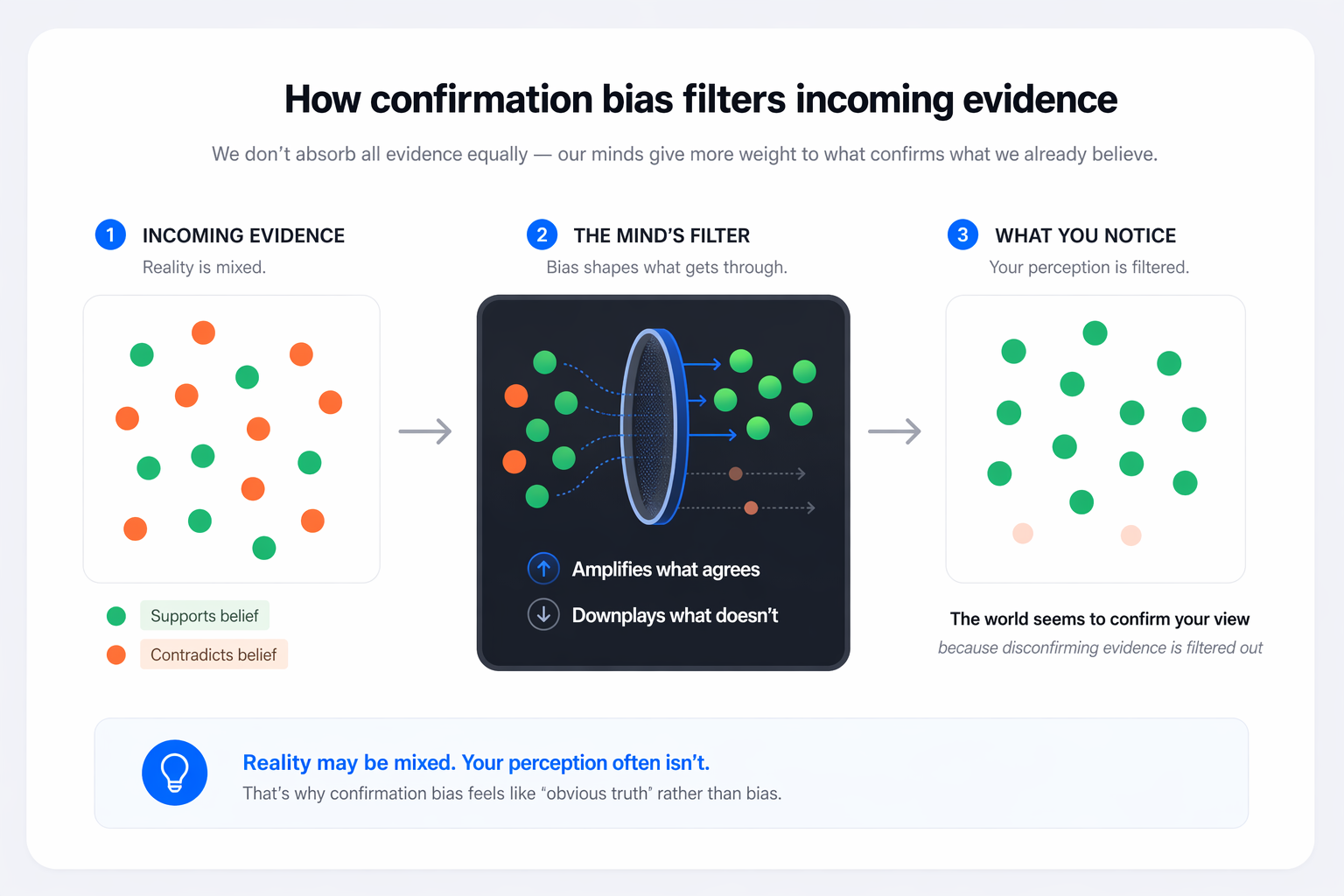

The textbook definition: confirmation bias is the tendency to search for, interpret, favor, and recall information in a way that confirms one's pre-existing beliefs. It's an umbrella term covering several related patterns, but they all share a common shape — the mind treats evidence that supports what we already think as credible and important, and evidence that contradicts it as weak, irrelevant, or suspect.

It's important to be clear about what confirmation bias is not. It's not lying. It's not stupidity. It's not a character flaw limited to people on the other political team. It is a feature of how ordinary, intelligent human minds process information, and everyone — scientists, doctors, judges, CEOs, you, me — exhibits it reliably under experimental conditions. It is a default operating mode of cognition, and staying out of it requires active, deliberate effort.

The cruel part is that confirmation bias feels exactly like good reasoning from the inside. When you dismiss an article because its methodology seems weak, you genuinely believe you're being rigorous. When you find a new piece of evidence compelling, you genuinely believe you're being open-minded. And sometimes you are. The problem is that the rigor and the open-mindedness apply asymmetrically — tighter standards for evidence against your view, looser standards for evidence for it — and you can't feel the asymmetry. It's invisible from the inside, in the same way that a colorblind person doesn't experience their world as missing a color.

The Wason experiments

Wason's 2-4-6 task was only the first of a series of experiments that made his name. A few years later he produced an even sharper version — the Wason selection task — which remains one of the most studied problems in the history of psychology. It goes like this:

The Wason Selection Task

Four cards on a table

Each card has a letter on one side and a number on the other. The four cards show: E, K, 4, 7. I claim the following rule is true: "If a card has a vowel on one side, it has an even number on the other side." Which cards must you turn over to test whether my rule is true or false?

Take a moment. Most people answer "E and 4." Some answer "E only." Both are wrong.

The logic is ruthless once you see it. The rule says "if vowel, then even." To disprove it, you need to find a vowel with an odd number, which means flipping E (to check for an odd on its back) and 7 (to check for a vowel on its back). The K is irrelevant because the rule says nothing about consonants. The 4 is irrelevant too: whether it has a vowel or consonant on its back, neither case can disprove the rule (the rule doesn't say consonants can't have even numbers). Yet in Wason's original studies fewer than 10% of participants chose the correct pair, and the most common wrong answer included the 4. People search for a card that could confirm the rule rather than one that could break it.

This is confirmation bias in its purest laboratory form. People don't fail the task because they're bad at logic. They fail because the mind reflexively turns "test this rule" into "find support for this rule" — two very different operations that feel identical from the inside. Disconfirmation is how science, engineering, and law actually work. Confirmation is how cognition naturally works. The gap between them is where most reasoning errors live.
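The card logic can be checked mechanically. The sketch below asks, for each visible face, whether any possible hidden face would break the rule. The sample of hidden letters and digits is an assumption made for illustration, as are the function names.

```python
VOWELS = set("AEIOU")

def violates(letter, number):
    """'If vowel, then even' is broken only by a vowel paired with an odd number."""
    return letter in VOWELS and number % 2 == 1

def can_falsify(visible):
    """True if some hidden face could make this card a counterexample."""
    sample_letters = "AEIOUBCDK"   # assumed sample of possible hidden letters
    sample_numbers = range(1, 10)  # assumed sample of possible hidden numbers
    if visible.isalpha():
        return any(violates(visible, n) for n in sample_numbers)
    return any(violates(letter, int(visible)) for letter in sample_letters)

cards = ["E", "K", "4", "7"]
must_turn = [card for card in cards if can_falsify(card)]
print(must_turn)  # ['E', '7']
```

The 4 drops out because no hidden letter can turn an even number into a violation. Turning it over can only produce agreement with the rule, never a refutation.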

Three flavors of the bias

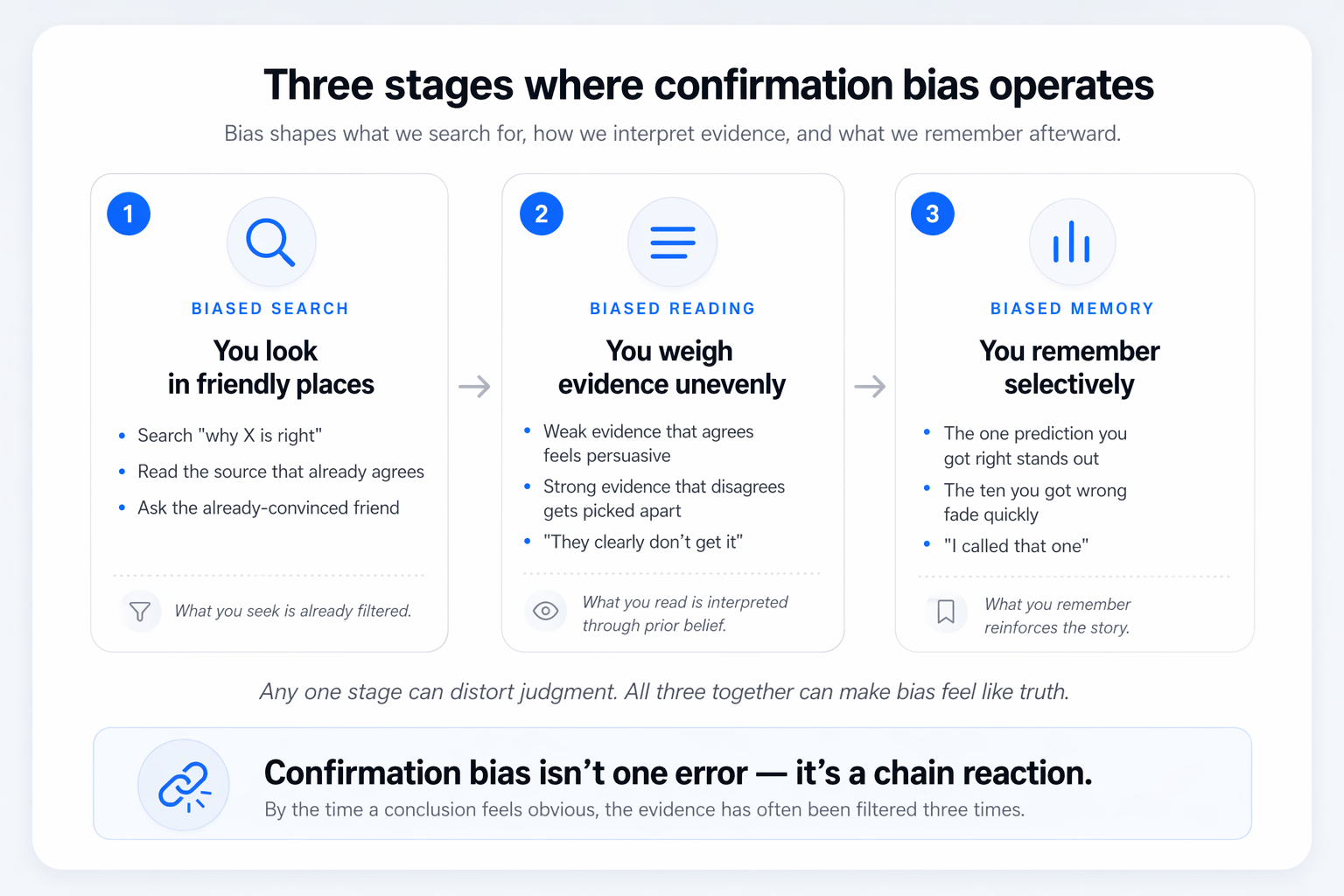

Researchers typically break confirmation bias into three distinct but related patterns, each operating at a different stage of thinking. Understanding them separately is useful, because the countermeasures for each are different.

The first, biased search, is what happens before you've even started evaluating evidence. You Google "why X is true" rather than "why X is false." You read the newsletter that shares your priors rather than the one that doesn't. You ask colleagues who already agree with you. The information environment you end up in is one you constructed — often unconsciously — to reinforce what you already believe. The modern algorithmic internet amplifies this catastrophically, since every platform is engineered to show you more of what you engaged with yesterday.

The second, biased interpretation, is the asymmetric-standards effect. When you encounter evidence that supports your view, you accept it fairly casually. When you encounter evidence against, you apply stricter scrutiny: the methodology, the sample size, the author's credentials, the framing — all get examined. Both standards are, in isolation, reasonable. The problem is applying them only to one side. A classic 1979 Stanford study by Lord, Ross, and Lepper showed this with terrifying clarity: people shown identical studies on capital punishment rated the methodology as sound when the study agreed with them, and flawed when it didn't — the same studies, with their conclusions swapped.
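A toy model shows how asymmetric standards alone can drive two people apart on identical evidence. This is a sketch under stated assumptions, not the method of the 1979 study: beliefs are tracked as log-odds, each piece of evidence is worth a factor of two for or against, and a hypothetical `discount` parameter shrinks any evidence that cuts against the reader's current lean.

```python
import math

def update(log_odds, evidence, discount):
    """One Bayesian-style update; evidence against the current lean is shrunk."""
    against_lean = (evidence > 0) != (log_odds > 0)
    weight = discount if against_lean else 1.0
    return log_odds + weight * evidence

def final_belief(prior, evidence_stream, discount):
    """Run a prior probability through the evidence and return the posterior."""
    log_odds = math.log(prior / (1 - prior))
    for evidence in evidence_stream:
        log_odds = update(log_odds, evidence, discount)
    return 1 / (1 + math.exp(-log_odds))

# Perfectly balanced evidence: five items for, five against, equal strength.
mixed_evidence = [math.log(2), -math.log(2)] * 5

# discount=1.0 is an even-handed reader; 0.5 halves unwelcome evidence (assumed value).
fair_believer = final_belief(0.7, mixed_evidence, discount=1.0)
fair_skeptic = final_belief(0.3, mixed_evidence, discount=1.0)
biased_believer = final_belief(0.7, mixed_evidence, discount=0.5)
biased_skeptic = final_belief(0.3, mixed_evidence, discount=0.5)

print(round(fair_believer, 2), round(fair_skeptic, 2))      # 0.7 0.3
print(round(biased_believer, 2), round(biased_skeptic, 2))  # 0.93 0.07
```

With even-handed weighting, balanced evidence leaves both readers where they started. With the discount applied, the same ten items push the believer to about 93% and the skeptic to about 7%, even though neither reader ignored a single piece of evidence.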

The third, biased memory, operates after the fact. You remember the prediction you got right and forget the nine you got wrong. You remember the time your hunch paid off and forget the times it didn't. You remember the article that vindicated your view and forget the one that challenged it. Over time, your memory becomes a curated museum of your own correctness, which feeds confidence that further fuels the first two effects.

Why the brain works this way

If confirmation bias is so destructive, why is the brain built to do it? The honest answer is that it's not a pure bug — it's a feature that pays dividends in some environments and exacts steep costs in others.

One leading explanation, developed by psychologists Hugo Mercier and Dan Sperber in their argumentative theory of reasoning, is that human reasoning evolved primarily not to find truth but to win arguments. Our ancestors survived in small groups where persuading allies and winning disputes mattered enormously. A mind built to efficiently generate supporting arguments for whatever position it's defending is, in that environment, highly adaptive. A mind built to impartially weigh evidence on all sides is slower, more indecisive, and easier to out-argue.

This theory explains something otherwise puzzling: people are often much better at spotting flaws in other people's reasoning than in their own. That's exactly what you'd expect from a mind evolved for arguing: optimized for attacking other people's positions and for defending its own, with a large blind spot where self-critique should go. Confirmation bias isn't a glitch in the system; in the original environment, it was the system.

The evolutionary logic

A mind that efficiently defends its own positions and attacks others' is well-suited for group politics. A mind that impartially weighs truth is well-suited for science. We evolved the first. We have to deliberately construct the second.

There's also a simpler, more mechanical explanation. The brain is a prediction engine. It constantly builds models of the world and updates them based on incoming data. But updating a model is expensive — cognitively, emotionally, socially. Confirming an existing model is cheap. Given a choice between cheap-and-familiar and expensive-and-disruptive, the brain's default energy-saving behavior is to interpret ambiguous data as confirming rather than disconfirming. Most of the time, this works fine. The times it doesn't are the ones this essay is about.

Science: the replication crisis

Science is often held up as the antidote to confirmation bias — an institution specifically designed to force disconfirmation. In theory, this is true. In practice, scientists are human beings, and the last fifteen years of psychological and biomedical research have revealed how deeply confirmation bias shapes even rigorous scientific work.

Starting around 2011, a wave of large-scale replication projects began trying to reproduce landmark findings in psychology. The results were sobering. Depending on the field and the study, between 40% and 70% of published findings failed to replicate — meaning that when other labs ran the same experiment, they didn't get the same results. Some of this was statistical noise. But a significant portion traced back to researchers' confirmation bias shaping the original work: selectively reporting analyses that produced significant results, reframing hypotheses after seeing the data, stopping data collection when the numbers looked good, and the now-notorious practice of p-hacking — running many variations of an analysis until one produced the "right" answer.

None of these researchers were fabricating data. Most weren't even consciously cheating. They were running their experiments, looking at the results, and — through a thousand small unconscious decisions about which analyses to emphasize and which to drop — producing findings that confirmed their hypotheses. The scientific community's response has been instructive: pre-registration of hypotheses (lock in your predictions before seeing the data), adversarial collaborations (pair skeptics with believers to run joint studies), and large-scale replication consortia (many labs test the same claim independently). All of these are, in effect, institutional countermeasures against confirmation bias.
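The arithmetic behind p-hacking is short enough to write down. The sketch below assumes the conventional 0.05 significance threshold and, as a simplification, that each analysis variation is statistically independent. Real variations on one dataset are correlated, so treat the numbers as an illustration rather than an estimate for any actual study.

```python
def chance_of_false_positive(num_analyses, alpha=0.05):
    """Probability that at least one of several independent tests of a
    nonexistent effect comes out 'significant' at threshold alpha."""
    return 1 - (1 - alpha) ** num_analyses

for num_analyses in (1, 5, 10, 20):
    print(num_analyses, round(chance_of_false_positive(num_analyses), 3))
# 1 0.05
# 5 0.226
# 10 0.401
# 20 0.642
```

Under these assumptions, a researcher who tries twenty reasonable-looking variations has roughly a two-in-three chance of finding a "significant" result where no effect exists. Pre-registration works by fixing the number of analyses at one before the data arrive.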

Medicine and misdiagnosis

In medicine, confirmation bias has a specific name: diagnostic momentum. A doctor forms an initial hypothesis about what's wrong with a patient — often within the first minute of the encounter — and then subsequent information tends to be interpreted in light of that initial guess. Symptoms that fit get noticed; symptoms that don't get explained away or missed entirely. By the time specialist consults and test results come in, the initial label has often calcified, and correcting it becomes surprisingly difficult.

Research on diagnostic error consistently finds that the majority of misdiagnoses involve cognitive factors, and confirmation bias is among the most frequent. In one study, physicians given a plausible but incorrect initial diagnosis continued to favor it even after being shown test results that, in a controlled context, would have pointed clearly elsewhere. The anchoring was remarkably stable, and the physicians' confidence in their (incorrect) diagnosis was often high.

When the chart tells you what to see

A 40-year-old woman arrives at an emergency department with chest pain, labeled in the referral note as "anxiety-related." The ED physician reads the note, observes the patient appears anxious, and orders a reassuring workup. She is discharged with a prescription for anti-anxiety medication. Six hours later, she returns in cardiogenic shock from an ongoing heart attack that had been missed.

No individual step was incompetent. The initial label anchored perception; the anchoring filtered which findings got emphasized; the filtering justified the initial label. Diagnostic momentum is confirmation bias in a white coat, and it kills people.

The best hospitals now build explicit countermeasures into clinical workflow. Structured diagnostic checklists force clinicians to consider alternatives. "Diagnostic timeouts" — brief pauses before committing to a final diagnosis, during which the doctor asks "what else could this be?" — have been shown to reduce error rates. These aren't expensive interventions. They work because they insert, into a system that would otherwise run on confirmation-biased intuition, a deliberate moment of disconfirmation.

Politics, polarization, and the news

Nowhere is confirmation bias more visible — or more consequential — than in political life. Two neighbors with access to the same facts can reach opposite conclusions about nearly any contested issue, and each will be genuinely certain that the other is being irrational. Both, from their own perspectives, are right: each is reasoning carefully from the evidence they've seen. The trouble is they've seen different evidence, and each has filtered what they've seen through different priors.

The information environment has made this enormously worse. Every major platform — search engines, social feeds, news aggregators, video recommendations — is optimized for engagement, and engagement correlates heavily with content that confirms what users already believe. This produces what Eli Pariser named the filter bubble: an information diet personalized to each user such that challenging views become progressively harder to encounter. Over time, even a user who tries to stay balanced will find that the system serves them a world confirming their priors, because that world holds their attention better.

It is difficult to get a man to understand something when his salary depends on his not understanding it.

— Upton Sinclair

The effect compounds. As your information diet becomes more uniform, your model of what "most reasonable people think" drifts toward your own views, because most of the people you encounter seem to agree. You then interpret disagreement as a sign of extremism or bad faith rather than as a genuine alternative perspective, which makes you less likely to engage with it seriously — which further homogenizes your inputs. This is one of the mechanisms behind political polarization not just of opinion but of perceived reality: people in different bubbles increasingly disagree not only about what to do but about what is happening.

The uncomfortable implication is that feeling certain your political opponents are stupid or evil is a near-perfect signal that confirmation bias is operating on you. Smart, decent people reach conclusions you disagree with for reasons that make sense to them given the evidence they've encountered. If they seem to you to be reasoning from an alien premise, it's possibly because their premise is alien — they've been shown a different world than you have. This doesn't mean all views are equally valid. It means that the feeling "I obviously see the truth and they obviously don't" is almost always wrong, regardless of which side is feeling it.

Everyday decisions

The political examples are dramatic, but most of the damage confirmation bias does in an ordinary life is subtler. It shapes decisions you don't even realize you're making.

When you're considering whether to stay in a relationship, a job, or a city, confirmation bias tilts your weighting of daily evidence. If you think the relationship is working, you notice the good days and rationalize the bad. If you've decided it isn't, you notice the friction and forget the ease. The underlying reality may not have changed — your interpretation of the same experiences has. This is why relationship counselors often ask clients to keep a log of specific incidents: memory alone is too easily rewritten by whichever story currently feels true.

Investment decisions show it sharply. Investors who buy a stock almost always read more news about that stock afterward, and the news they seek out tends to be positive or explanatory of recent price action. Losing positions feel like temporary setbacks ("the market doesn't understand yet"); winning positions feel like confirmations of skill. A large body of research on individual investors finds that this pattern tends to produce worse returns than simple index investing, but the feedback loop of self-confirmation is powerful enough to keep most individual investors trading anyway.

And it pervades hiring. An interviewer forms a gut impression of a candidate in the first two minutes — research suggests often in the first thirty seconds — and then the remainder of the interview tends to confirm that initial read. If the first impression was positive, the candidate's ambiguous answers get interpreted charitably. If negative, the same answers read as evasive or weak. Structured interviews with consistent questions and blind scoring partly correct this, which is why companies that adopt them see measurably better hiring outcomes. But most interviews still run the intuitive way, and most hires are still partly confirmation of a 30-second impression.

How to counter it

Confirmation bias can't be eliminated. It's too deeply wired, too cheap, too natural. What it can be is interrupted — through deliberate habits and institutional structures that force the mind to do what it wouldn't do spontaneously.

Practical countermeasures

Ask "how could I be wrong?" before concluding

The single highest-leverage habit. Before committing to a belief or decision, spend five minutes generating reasons you might be mistaken. Not "are there any reasons" — generate them. This activates the disconfirmation mode the Wason experiments showed people skip by default.

Steelman the opposing view

The opposite of a straw man: rebuild the other side's argument in its strongest form, one that addresses your best objections. If you can't produce a version a thoughtful opponent would recognize as fair, you don't understand the position yet — you're arguing with a simplified caricature.

Define disconfirming evidence in advance

Before gathering data, specify: "What specific finding would change my mind?" If nothing you can imagine would update your view, you're not reasoning — you're defending. Writing it down removes your ability to goalpost-shift later.

Seek out thoughtful disagreement

Curate your information diet with at least a few sources whose views you'd naturally push back against but whose reasoning you respect. Not to convert you — to prevent your default inputs from being a closed loop. Treat disagreement as a resource, not a threat.

Keep a track record

Write down your predictions with dates. Review them quarterly. Biased memory will tell you you've been mostly right; a log will tell you the truth. Calibration improves only when you confront the record, which is uncomfortable and why almost nobody does it.
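A prediction log can be as simple as a list, and scoring it takes a few lines. The sketch below uses the Brier score, a standard scoring rule for probability forecasts that this essay does not otherwise name, so treat it as one possible choice. The entries, field names, and dates are hypothetical.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class Prediction:
    made_on: date
    claim: str
    confidence: float  # stated probability that the claim will come true
    came_true: bool

def brier_score(log):
    """Mean squared gap between stated confidence and outcome (0 is perfect)."""
    return sum((p.confidence - p.came_true) ** 2 for p in log) / len(log)

def hit_rate(log):
    """Fraction of predictions that actually came true."""
    return sum(p.came_true for p in log) / len(log)

# Hypothetical entries, made up purely for illustration.
log = [
    Prediction(date(2024, 1, 5), "Project ships by March", 0.9, False),
    Prediction(date(2024, 2, 1), "Candidate accepts the offer", 0.9, True),
    Prediction(date(2024, 2, 20), "Stock beats the index this quarter", 0.9, False),
    Prediction(date(2024, 3, 3), "Vendor renews the contract", 0.9, True),
]

average_confidence = sum(p.confidence for p in log) / len(log)
print(round(average_confidence, 2), hit_rate(log))  # 0.9 0.5
print(round(brier_score(log), 2))                   # 0.41
```

In this made-up log the forecaster felt 90% sure every time and was right half the time. Always answering 50% would have scored 0.25, so the confident record (0.41) is worse than admitting ignorance. That gap is what biased memory hides and a written record exposes.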

Build institutional checks

For team decisions, structure disagreement in. Assign someone to argue the opposite. Run premortems. Use blind reviews. Pre-register predictions. Individual willpower is not enough — design the process so disconfirmation is built into the workflow, not left to virtue.

The principle, restated

Your mind is a confirmation engine running silently in the background. You can't turn it off, but you can interrupt it — by deliberately seeking disconfirmation, steelmanning the opposition, and designing processes that surface what your default cognition filters out. The goal isn't to have no priors. It's to hold them loosely enough that reality can still reach you.

Peter Wason's 2-4-6 task is now sixty-five years old, and the finding has replicated more times than almost anything in psychology: people, given a rule to test, hunt for confirmation rather than disconfirmation. They announce wrong answers confidently because their methodology quietly guaranteed they'd never find the flaw. The puzzle is a cartoon, but the pattern it reveals runs through everything. The scientist running the experiment. The doctor reading the chart. The investor reading the news. The voter watching the debate. The person sitting across from you right now. And you, reading this sentence and wondering which of them the description best fits.

That last reflex — the instinct that confirmation bias is mostly other people's problem — is itself confirmation bias. It's the bias protecting itself. The first real step out isn't learning to spot the bias in others. It's accepting, as a settled matter, that it is operating on you right now, on the views you are most confident are simply correct, and that the feeling of obvious correctness is precisely the sensation the bias produces. Everything after that is work. But at least it's work you can actually do.