10 AI Skills That Will Decide Your Career in 2026

AI won't take your job. A knowledge worker who's better at using AI will. Here are the ten skills that separate the people getting promoted from the people getting quietly replaced — and how to build each one this year.

The line you've heard for three years — "AI won't take your job, a person using AI will" — is technically true and strategically useless. It tells you the threat exists. It doesn't tell you what to do.

What's actually happening inside knowledge-work teams in 2026 is more specific. The gap between the top 10% of operators and the median operator has widened more than most companies have priced in. A senior analyst with strong AI skills is now producing the work of three analysts. A marketing manager who knows how to orchestrate AI workflows is shipping campaigns that used to require an agency. A lawyer using LLMs well is reviewing contracts at 4x speed with fewer errors.

The people doing this aren't smarter. They've built a specific stack of skills — partly technical, partly cognitive, partly editorial — that compounds over time. The people who haven't are still doing 2022 work in 2026, and their reviews are starting to reflect it.

AI didn't change what knowledge workers are paid for. It changed how the work gets done — and the people who adapted first are now uncatchable.

This is the playbook. Ten skills, ranked roughly by how transferable and durable they are. Not tools — skills. The tools will change. The skills won't.

1. Prompting as a thinking discipline

The first skill is the one most people learn badly. They treat prompting as command-typing — "summarise this," "write me an email" — and conclude AI is mediocre when the output is mediocre. The output is mediocre because the prompt was.

Real prompting is closer to briefing a smart contractor. You provide context (who you are, what you're working on), specify the format (length, tone, structure), define the audience, give examples of what good looks like, and explain the constraints. A 50-word prompt that covers these will almost always outperform a 5-word prompt, usually by a wide margin.

The deeper skill underneath: knowing what you want clearly enough to describe it. Most bad prompts come from unclear thinking, not bad prompting technique.
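The briefing structure described above (context, format, audience, examples, constraints) can be sketched in code. This is a minimal illustration, not a standard: the `Brief` class, its field names, and the sample values are all hypothetical, and the rendered text could be pasted into any chat tool.

```python
from dataclasses import dataclass, field


@dataclass
class Brief:
    """Hypothetical container for the parts of a good prompt."""
    context: str
    task: str
    audience: str
    output_format: str
    examples: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Join the non-empty parts into one labelled prompt string."""
        sections = [
            ("Context", self.context),
            ("Task", self.task),
            ("Audience", self.audience),
            ("Format", self.output_format),
            ("Examples of good output", "\n".join(f"- {e}" for e in self.examples)),
            ("Constraints", "\n".join(f"- {c}" for c in self.constraints)),
        ]
        # Sections with an empty body are skipped rather than printed blank.
        return "\n\n".join(f"{label}:\n{body}" for label, body in sections if body)


# Illustrative values only.
brief = Brief(
    context="I lead customer support at a 40-person software company.",
    task="Summarise the attached ticket export into the top three recurring issues.",
    audience="Our head of product, who has five minutes to read it.",
    output_format="Three bullets, under 120 words total, plain language.",
    constraints=["Quote ticket IDs for every claim", "No speculation about causes"],
)
prompt = brief.render()
print(prompt)
```

Filling in the fields is the real exercise: if you can't write the `audience` or `output_format` line, the thinking isn't finished yet.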

2. Editing AI output with strong taste

The second skill is the one that quietly separates the top tier from everyone else. AI generates fluent, plausible-sounding work at infinite volume. Most of it is average. The differentiator isn't generation — it's editorial judgement.

Knowledge workers who win in 2026 know how to read AI output and immediately spot:

- The places it hedged when it should have committed

- The clichés it reached for instead of the specific

- The structural problems hiding under decent prose

- The factual claims that need verification

This is taste. It's slow to build but extraordinarily durable. The professional who can take an AI draft from "fine" to "excellent" in 15 minutes is doing the work of someone who used to spend three hours from scratch — and the output is often better.

3. Workflow design and orchestration

| Era | What workflow design looked like |
|---|---|
| 2022 | Manual handoffs, copy-paste between tools |
| 2024 | Single-tool automations (Zapier, Make) |
| 2026 | Multi-step AI workflows with conditional logic, agents, human review checkpoints |

The skill is no longer "I know how to use ChatGPT." It's "I can design a 7-step workflow that pulls data from three sources, drafts in AI, routes to a human for review, and ships to the destination — and I can debug it when one step fails."

This is genuinely new work. It blends product thinking, light technical fluency, and operational sense. The people doing it well in 2026 didn't come from engineering — they came from operations, project management, and analyst roles, and they learned to build because the tools finally let them.
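To make the idea concrete, here is a shortened four-step version of such a workflow as a sketch. Every function in it is a hypothetical stand-in (no real data sources or model calls), and the point is the shape: named steps, a human review checkpoint, and an error that tells you which step failed.

```python
from typing import Callable

# Each step takes the running state dict and returns an updated one.
Step = Callable[[dict], dict]


class StepFailed(Exception):
    """Raised with the name of the step that broke, so debugging starts there."""


def run_workflow(steps: list[tuple[str, Step]], state: dict) -> dict:
    for name, step in steps:
        try:
            state = step(state)
        except Exception as exc:
            raise StepFailed(f"step '{name}' failed: {exc}") from exc
        state.setdefault("log", []).append(name)  # record completed steps
    return state


# Hypothetical stand-ins for real integrations.
def pull_sources(state: dict) -> dict:
    return {**state, "data": ["crm export", "survey results", "web analytics"]}


def draft_with_ai(state: dict) -> dict:
    # Placeholder: a real step would call a model here.
    return {**state, "draft": f"Report based on {len(state['data'])} sources"}


def human_review(state: dict) -> dict:
    # Checkpoint: nothing ships unless a reviewer has approved.
    if not state.get("approved"):
        raise ValueError("draft not approved by reviewer")
    return state


def ship(state: dict) -> dict:
    return {**state, "shipped": True}


steps = [("pull", pull_sources), ("draft", draft_with_ai),
         ("review", human_review), ("ship", ship)]
result = run_workflow(steps, {"approved": True})
print(result["log"])
```

Running the same steps with `{"approved": False}` raises `StepFailed` naming the `review` step, which is the "debug it when one step fails" half of the skill.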

4. Verification and source-checking

LLMs hallucinate. They will continue to hallucinate. The hallucination rate has dropped meaningfully since 2023, but it has not gone to zero, and it never will, because language models predict. They don't know.

The skill is treating every AI claim — citations, statistics, names, dates, technical specifics — as provisional until verified. The professionals who will be trusted with consequential work in 2026 are the ones who can move fast with AI and maintain a verification reflex strong enough that wrong information doesn't ship. The combination is rare, and it's compensated accordingly.

A useful habit: when AI gives you a fact you'll cite externally, ask it for its source, then check that the source exists and actually says what the AI claims. This usually takes under a minute and catches most hallucinations before they ship.
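One way to turn the verification reflex into a routine is a simple claim ledger. The sketch below is an assumption about how you might track it, not an established tool: the `Claim` class, the `unverified` helper, and the sample claims are all made up for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    text: str
    source: Optional[str] = None  # where the AI says the fact came from
    verified: bool = False        # set True only after a human read the source


def unverified(claims: list[Claim]) -> list[Claim]:
    """Return every claim that should block publication."""
    return [c for c in claims if not (c.source and c.verified)]


# Illustrative claims only.
claims = [
    Claim("Revenue grew 12% year over year", source="Q3 board deck, slide 4", verified=True),
    Claim("Competitor X launched in 2019", source="press release"),
    Claim("Most customers prefer annual billing"),
]
blocked = unverified(claims)
for claim in blocked:
    print("Check before shipping:", claim.text)
```

A claim with a source but no human check still counts as unverified here, because a cited source can itself be invented.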

5. Knowing when not to use AI

The most underrated skill on the list. AI is genuinely bad, or at least suboptimal, in several common situations.

When to skip the AI:

- Genuine creative breakthroughs. AI is excellent at variations on the known. It's poor at original synthesis. If you need a real new idea, write the first draft yourself.

- High-stakes interpersonal communication. Difficult feedback, condolences, sensitive negotiations. AI-generated versions read as AI-generated, and people can tell.

- Decisions that require your judgement. Don't ask AI what you should do. Ask it to help you think through the options. The decision must remain yours.

- Anything that's faster to do yourself. Three-line emails, simple lookups, quick formatting. The AI overhead exceeds the saving.

The professional who knows the boundary is more productive than the one who tries to AI everything — because the AI-everything person spends a third of their day prompting and editing for tasks that didn't need it.

6. Context engineering: building the AI's working memory

By 2026, the highest-leverage AI users aren't the ones with the best prompts. They're the ones with the best context — the systems, documents, and reference material the AI works against.

This means:

- A personal knowledge base (Notion, Obsidian, or similar) the AI can reference for your projects, preferences, voice, and history

- Reusable prompt templates for recurring tasks (weekly reports, candidate evaluations, meeting prep)

- A library of examples of "good" output for the work you do most

- Style guides, brand voice docs, decision frameworks captured in formats AI can ingest

It's the digital equivalent of organising your tools before a job. The people who invest in it spend less time prompting and get better output, because the AI starts from a much richer baseline of context every time.
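A reusable template plus stored context might look something like the sketch below. It uses Python's standard `string.Template`; the template wording, the `context_library` contents, and the `build_prompt` helper are hypothetical stand-ins for documents that would normally live in your knowledge base.

```python
from string import Template

# Hypothetical reusable template for a recurring task.
WEEKLY_REPORT = Template(
    "Follow this style guide:\n$style_guide\n\n"
    "Here is an example of a good report:\n$example\n\n"
    "Write this week's report from these notes:\n$notes"
)

# Stand-ins for documents stored in a personal knowledge base.
context_library = {
    "style_guide": "Short sentences. Lead with the decision needed. No jargon.",
    "example": "Decision needed: approve vendor B. Why: 20% cheaper, same SLA.",
}


def build_prompt(template: Template, library: dict[str, str], **task_inputs: str) -> str:
    """Merge stored context with this week's inputs; a missing field raises KeyError."""
    return template.substitute({**library, **task_inputs})


prompt = build_prompt(WEEKLY_REPORT, context_library, notes="Migration done; two bugs open.")
print(prompt)
```

The stored context is written once and reused every week, so the only thing that changes per run is the `notes` argument.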

7. Using AI as a thinking partner, not just an output machine

Most knowledge workers use AI to produce things — drafts, summaries, code, slides. The top tier uses it to think — to argue against their own ideas, to surface assumptions they're making, to stress-test plans before they ship.

The skill is conversational rather than transactional. Instead of "write me an email," it looks like:

- "Here's my plan for the campaign. What's the strongest argument against it?"

- "I'm choosing between these two job offers. Walk me through the tradeoffs I might be missing."

- "Critique this strategy doc as if you were our skeptical board member."

Done well, this is closer to having a 24/7 sparring partner than a writing assistant. It compounds your judgement instead of replacing it. And it's the use case most people miss entirely because it doesn't show up in productivity benchmarks.

8. Domain depth — the part AI makes more valuable, not less

Counterintuitively, AI has made deep domain expertise more valuable, not less. Here's why: when AI can produce a fluent first draft of anything, the bottleneck shifts to whoever can spot what's wrong with it. That requires real expertise.

A junior marketer using AI looks productive but produces forgettable work — because they can't tell what's missing. A senior marketer using AI is genuinely formidable — because they can read a draft and instantly identify the strategic gap, the wrong reference, the buried lede.

The implication: don't abandon depth in pursuit of AI breadth. Build a domain you genuinely understand — finance, design, law, healthcare, marketing, whatever — and use AI to extend that depth. The combination beats either one alone, by a lot.

9. Continuous re-learning as a default mode

The half-life of AI tooling knowledge is now around 6–9 months. The model you mastered last year is two generations behind. The workflow you built six months ago has a better version available now. The frontier moves fast enough that "I learned AI" is a meaningless statement — the question is what you've learned this quarter.

The skill isn't intelligence. It's time and attention budget. The professionals staying current have built habits:

- 30 minutes a week trying a new tool or workflow

- A small handful of trusted sources (newsletters, podcasts, practitioners) for signal

- A practice of rebuilding their own workflows every 3–6 months instead of letting them ossify

- A willingness to throw away what they learned last year if the new thing is genuinely better

This isn't optional. The compound interest on staying current — or falling behind — over five years is brutal in both directions.

10. Strategic narrative: explaining what you do, in human terms

The final skill is the one most knowledge workers underrate, and it's becoming decisive. As AI handles more of the production work, what's left for humans is increasingly: framing the problem, defining what success looks like, communicating the result to other humans, and building trust.

This is narrative work. It looks like:

- Writing a clear strategy memo that aligns a team around a direction

- Presenting findings in a way that lands with executives who haven't done the analysis

- Translating between technical specialists and business stakeholders

- Telling the story of why this work matters

In 2026, the analyst who can run AI workflows AND walk a CEO through the implications in 10 minutes is on a different career trajectory than the one who can only do the first half. The skill is rhetorical, editorial, and strategic — and it's recession-proof in a way that pure technical AI skill isn't.

The infrastructure of an AI-fluent professional

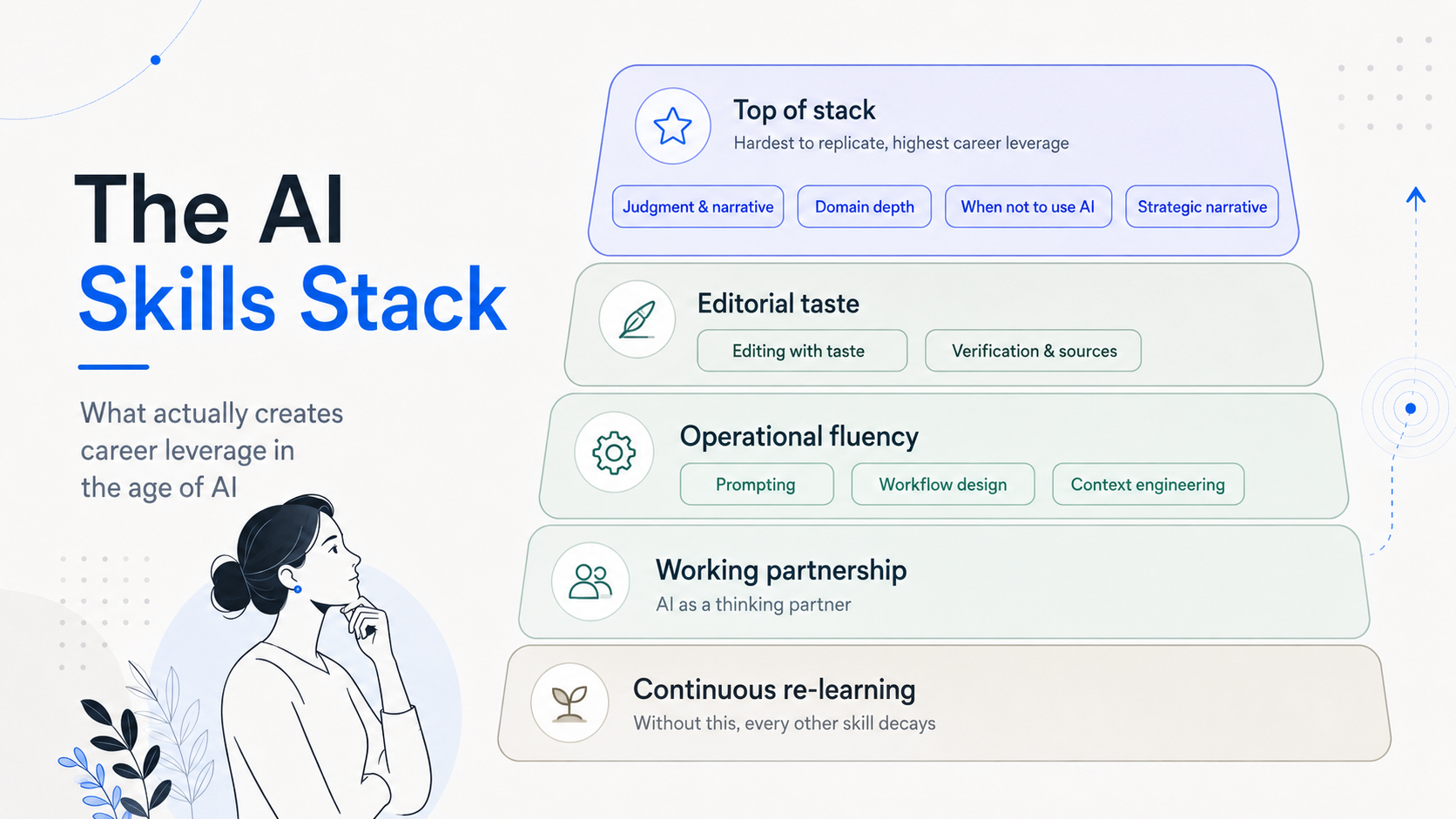

Stack these skills and you get something coherent. Here's how the layers fit together:

The foundation layer is the one most people skip. They try to learn skill #2 (editorial taste) without skill #9 (continuous re-learning) underneath it, and three months later they're using last year's tools to do this year's work.

The questions that reveal where you actually stand

Before deciding which of these to invest in, three questions cut through the noise:

Which skill on this list would have the biggest impact on your work this quarter? Not the most interesting one; the highest-leverage one. Pick that one. Spend 30 minutes a day on it for 30 days. The compounding is real.

What's a task you do every week that AI could plausibly do better? That's your first workflow project. Build it badly, then improve it. The first version doesn't have to be good. It has to exist.

If your job were re-scoped tomorrow to assume AI fluency as a baseline, what would still be uniquely yours? That's your moat. Lean into it harder. AI doesn't replace what's uniquely yours — it amplifies it.

The professionals who'll be most valuable in 2026 aren't the ones who used AI the earliest. They're the ones who built durable skills around it — judgement, taste, narrative, depth — while everyone else was still treating it like a novelty.

The good news: most of these skills compound on what you already have. Domain expertise gets more valuable, not less. Editorial judgement matters more, not less. Communication skills, clear thinking, and the ability to learn fast — all of these go up in price.

The bad news: the gap is widening fast, and the people who haven't started building yet are losing ground every quarter. The right time to start was 2023. The second-best time is this week.